The Short Version

- Every marketing leader today is asking one of five questions about how their company is showing up in AI. Every free tool in the market is trying, with varying degrees of honesty, to answer one of them.

- We matched 28 free and freemium tools against four transparency questions. Six scored 4/4. Ten fell into a partial-credit middle. Twelve are confidence theater. The gap is the story.

- The honest ones will tell you where you have a problem. None of them, alone and free, will reliably tell you what to do about it.

- Download the full cheatsheet and keep it handy for the next time your VP of Marketing asks, “Is there a tool we can use to quickly see if we’re visible to ChatGPT?”

Where we are, and why this piece exists

Early in April, a GoodFirms study showed that while 89% of brands are already appearing in AI-generated search answers, only 14% of marketers currently track what those answers say. The same study found that 43% of marketers name AI search optimization as a core 2026 strategy. That means roughly three times as many marketers are prioritizing the channel as are measuring it. That is not a surprising number to any SEO I’ve asked.

As marketers at every level in every company, we’ve come to depend on the over-abundance of user data we can glean online, and this new AI platform “black hole” is frustrating us to no end.

And we all know where there is frustration, there is money to be made.

The number and variety of new AI-based analytics tools launched in the last 18 months is dwarfed only by the “free AI visibility tools” launched in the last two months.

The signal-to-noise ratio around these tools has gotten so low that this series was created to help make sense of it all. In Part 1 we covered some of the highest-signal research being done and shared by the leaders of the SEO community.

This piece does the inventory work. We took a look at 28 free and freemium tools from three different angles:

- Which of the top five questions marketers have about AI is the tool trying to answer?

- Is the tool being transparent about how it is answering the question?

- How well does it do its stated job: is it giving you facts, vibes, or wrong answers?

Before we look at how the tools stack up across these three angles, one note on method.

Our methodology

We studied 28 free and freemium tools and came out the other side with a way of deciding which one matches the question you are trying to answer.

There are five question buckets we’re all asking about our sites and how they’re seen by AI:

- Are we showing up in AI results at all?

- When buyers ask AI about my category, do we come up?

- How do we stack up against competitors?

- When AI describes my brand, does it get the facts right?

- Is my site technically set up for AI to find and read me?

We evaluated each tool against whatever its vendor makes publicly visible: homepage claims, sales-page methodology notes, pricing pages, free-tier output, help docs. We did not get private demos or sales briefings.

For every tool in our sample we asked these four transparency questions:

- Source transparency. Where does the data come from? Is the data source named, and can you verify it exists independently of the tool’s dashboard?

- Methodology transparency. How is the score computed? Is the formula, weighting, or scoring logic visible somewhere a customer can read without booking a call?

- Reproducibility. Run the same check twice, get the same answer? If the answer drifts, is the drift explained (refresh cadence, LLM non-determinism, query rotation)?

- Actionability. Does the output point you at a specific thing you can fix or change? Or does it stop at “your score is 42”?

Each tool got a checkmark or a blank for each question. The scoring is binary on purpose: either the information is visible to the customer or it is not.
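Because the rubric is binary, it fits in a spreadsheet or a few lines of code. Here is a minimal Python sketch, assuming you record one yes/no per question for each tool you evaluate. The class name, field names, and sample tool are hypothetical, and the tier labels simply mirror the groupings used in the inventory later in this piece.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransparencyCheck:
    """One tool's answers to the four transparency questions (True = visible to a customer)."""

    name: str
    source: bool        # Is the data source named and independently verifiable?
    methodology: bool   # Can a customer read the scoring logic without booking a call?
    reproducible: bool  # Same answer on rerun, or is any drift explained?
    actionable: bool    # Does the output point at a specific thing to fix?

    def score(self) -> int:
        # Binary scoring: each visible answer earns one point, for a maximum of 4.
        return sum([self.source, self.methodology, self.reproducible, self.actionable])

    def tier(self) -> str:
        # Labels mirror the inventory tiers; scores of 0 and 1 share the bottom tier.
        labels = {
            4: "transparent",
            3: "transparent with one calibrated gap",
            2: "partial transparency",
        }
        return labels.get(self.score(), "confidence theater")


# A hypothetical tool that names its source and is reproducible,
# but hides its formula and stops at a number.
example = TransparencyCheck("Hypothetical Grader", source=True, methodology=False,
                            reproducible=True, actionable=False)
print(f"{example.name}: {example.score()}/4, {example.tier()}")
```

Keeping the four answers as separate fields, rather than storing only the total, preserves which question a tool failed. That is usually the more useful thing to bring back to the vendor.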

If you remember only one thing from this piece, remember these four questions. Apply them to any AI visibility tool you evaluate for the rest of this year, and 90 percent of the evaluation work is already done.

Angle 1 — The five questions you are actually asking

When a VP or CMO walks up to your desk to talk about AI visibility, she is asking one of five questions. The questions sound similar, but the answers she is after are usually very different. As with your traditional SEO tools, the more specific you are about the question, the more confident you can be in the answer your tool gives. Every tool in our sample is really trying to answer one of those five.

Question 1: Are we showing up in AI results at all?

This is the first question, and the one most VPs actually want a two-second answer to.

This is the biggest bucket in our sample. Tools that sit here (shown alphabetically) all claim to hand you a single 0–100 number for “your AI visibility.”

- Adamigo AI Search Grader

- AEO Grader (aeograder.org)

- GoVISIBLE

- Gushwork AI Search Grader

- HubSpot AEO Grader

- Insites

- LLMClicks AI Visibility Checker

- LucidRank

- Mangools AI Search Grader

- ProductRank

- SE Ranking

- Semrush free AI Visibility checker

- Wordlift AI Audit

Question 2: When buyers ask AI about my category, do we come up?

This is the question the VP asks on the walk back to her office, after the quick-score check came back low.

This is the synthetic-prompt family. These tools run a structured set of prompts against the major AI platforms and report whether your brand comes up and how often.

Question 3: How do we stack up against competitors?

This is the question the CMO’s boss asks in the QBR.

This bucket is almost entirely the enterprise platforms we all already know and use. All of them are custom-priced and sales-gated. None are truly free tools — they are sales demos wearing a free-tool costume.

Question 4: When AI describes my brand, does it get the facts right?

This is the quieter fourth question, and the one that matters most when the answer is “no.”

This near-empty bucket is itself a finding. Most free tools will happily tell you whether you appear in AI answers. Almost none will tell you whether the AI is right about you.

- Ahrefs Brand Radar

- Signal Check (full disclosure, this is my tool)

Question 5: Is my site technically set up for AI to find and read me?

This is the question that should be asked first and almost never is.

Every tool in this bucket checks observable signals in your site’s code — schema validity, heading hierarchy, robots.txt, canonical URLs — against published rules.

- Google Rich Results Test

- SchemaValidator.org

- Web Aloha AI Readiness Checker

- Wordlift Structured Data Audit
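A robots.txt check is a good example of the kind of test this bucket runs, because the rules are published and the answer is deterministic. Here is a minimal Python sketch using the standard library's `urllib.robotparser`. It does not reproduce how any tool listed above implements the check. The crawler names in `AI_CRAWLERS` are our assumption about which user-agent tokens matter, and the sample file and URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens for a few AI crawlers; check each vendor's docs for the current list.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]

# A sample robots.txt, inlined so the sketch runs without network access.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return, for each AI crawler, whether robots.txt allows it to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


print(ai_crawler_access(SAMPLE_ROBOTS_TXT, "https://example.com/blog/post"))
print(ai_crawler_access(SAMPLE_ROBOTS_TXT, "https://example.com/admin/settings"))
```

With the sample file, GPTBot is blocked everywhere and the other three crawlers are blocked only under `/admin/`. Rerun it and you get the same answer, which is what makes this a code-base check.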

Angle 2 — How transparently each tool gets to its answer

Every tool in the sample takes one of four approaches to answering its chosen question. We call that approach the tool's base. There is no inherently right or wrong base; each has tradeoffs. The failure mode is not which base a tool uses. The failure mode is opacity about the base.

Synthetic base. Tools that run a fabricated set of prompts against one or more AI platforms and report what comes back. Fabricated prompts in, brand mentions out.

- Advantage: you can test thousands of queries, benchmark against competitors, and rerun the same prompt set to see drift.

- Limitation: the prompt set is always a sample of what real buyers ask, never the thing itself.
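At its core, a synthetic-base tool computes a mention rate: run a prompt set, then count the answers that name your brand. Here is a minimal Python sketch. It assumes you have already collected the AI responses as plain text and leaves the calls to each platform out of scope. The brand names and responses are invented for illustration. Comparing two runs of the same prompt set is also the simplest way to see drift.

```python
import re


def mention_rate(responses: list[str], brand: str) -> float:
    """Share of AI responses that mention the brand (case-insensitive, whole-word match)."""
    if not responses:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)


def drift(run_a: list[str], run_b: list[str], brand: str) -> float:
    """Absolute change in mention rate between two runs of the same prompt set."""
    return abs(mention_rate(run_a, brand) - mention_rate(run_b, brand))


# Hypothetical answers to the same three prompts, collected on two different days.
monday = [
    "Top picks include Acme Analytics and Northwind.",
    "Many teams use Northwind for this.",
    "Acme Analytics is a popular choice.",
]
tuesday = [
    "Northwind and Contoso are common options.",
    "Many teams use Northwind for this.",
    "Acme Analytics is a popular choice.",
]

print(round(mention_rate(monday, "Acme Analytics"), 2))
print(round(drift(monday, tuesday, "Acme Analytics"), 2))
```

Nothing changed on the brand's side between the two runs, yet the rate moved. An honest synthetic tool tells you how much of that movement to expect.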

Real base. Tools that measure observable behavior — actual AI-platform user prompts, server logs, citation tracking, real referral data.

- Advantage: what you measure is closer to what your buyers actually did.

- Limitation: AI platforms strip referrer data most of the time, so these tools miss every exposure that did not produce a click.
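A real-base tool starts from traffic you already have. Here is a minimal Python sketch of one piece of that work: classifying `Referer` values from your logs by AI platform. The hostname list is our assumption, will need maintenance, and is not taken from any tool in this sample. The sample referrers are invented. An empty referrer falls through uncounted, which is exactly the limitation described above.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for a few AI platforms; verify against your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def ai_platform(referrer: str) -> Optional[str]:
    """Map a Referer header to an AI platform name, or None if it isn't one we recognize."""
    host = (urlparse(referrer).hostname or "").lower()
    # Match the host itself or any subdomain of it (e.g. www.perplexity.ai).
    for known, platform in AI_REFERRER_HOSTS.items():
        if host == known or host.endswith("." + known):
            return platform
    return None


def count_ai_referrals(referrers: list[str]) -> dict[str, int]:
    """Tally visits by AI platform. Visits with a stripped or empty referrer are invisible here."""
    counts: dict[str, int] = {}
    for ref in referrers:
        platform = ai_platform(ref)
        if platform:
            counts[platform] = counts.get(platform, 0) + 1
    return counts


sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
    "",  # No referrer: could have come from anywhere, including an AI answer.
]
print(count_ai_referrals(sample))
```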

Code base. Tools that read your site’s own code (schema markup, structured data, HTML signals, robots.txt, and so on) and validate it against published rules. No AI prompts involved.

- Advantage: deterministic and reproducible; rerun the test, get the same answer.

- Limitation: tells you your site is technically fit for AI to read you, not whether AI is actually reading you.
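Here is what a code-base check can look like in practice. This minimal Python sketch pulls JSON-LD blocks out of a page and flags the two most basic failures: invalid JSON and a missing `@type`. It uses only the standard library's `html.parser` and `json`, and the class and function names are hypothetical. It is far narrower than what Google's Rich Results Test or a schema validator checks, with no vocabulary validation and no required-property rules.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        # Script contents may arrive in several chunks, so append rather than assign.
        if self._in_jsonld:
            self.blocks[-1] += data


def check_jsonld(html: str) -> list[str]:
    """Return problems found; an empty list means every block parsed and every node has a @type."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    problems = []
    if not extractor.blocks:
        problems.append("no JSON-LD found")
    for i, raw in enumerate(extractor.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as err:
            problems.append(f"block {i}: invalid JSON ({err.msg})")
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            # A top-level @graph wraps several nodes; check each node instead.
            nodes = item.get("@graph", [item]) if isinstance(item, dict) else [item]
            for node in nodes:
                if not isinstance(node, dict) or "@type" not in node:
                    problems.append(f"block {i}: node missing @type")
    return problems


page = """<html><head>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Organization", "name": "Example Co"}</script>
<script type="application/ld+json">{"@context": "https://schema.org", "name": "No type here"}</script>
</head><body></body></html>"""
print(check_jsonld(page))
```

For the sample page, only the second block is flagged. Like every code-base check, it reports whether the markup is readable, not whether any AI platform actually reads it.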

Unknown base. Tools that present a confident score without disclosing what their data source is or how the score is built. Their methodology is not visible at the public-page level. The base may be synthetic, it may be real, or it may be a random number generator; from the customer’s chair, you cannot tell.

Here is the full inventory, ordered by their final transparency score (top to bottom), alphabetical within each tier, with the base each tool uses flagged:

4/4 — the transparent six

- Google Rich Results Test — code base — 4/4

- Gumshoe — synthetic base — 4/4

- LLMClicks AI Visibility Checker — synthetic base — 4/4

- SchemaValidator.org — code base — 4/4

- Web Aloha AI Readiness Checker — code base — 4/4

- Wordlift Structured Data Audit — code base — 4/4

3/4 — transparent with one calibrated gap

- Ahrefs Brand Radar — real base — 3/4

- Signal Check (full disclosure, this is my tool) — synthetic base — 3/4

2/4 — partial transparency

- Insites — unknown base — 2/4

- LLM Pulse — synthetic base — 2/4

- LucidRank — synthetic base — 2/4

- Otterly — synthetic base — 2/4

- Peec AI — synthetic base — 2/4

- SE Ranking — unknown base — 2/4

- Semrush free AI Visibility checker — unknown base — 2/4

- Wordlift AI Audit — unknown base — 2/4

0–1/4 — confidence theater

- Adamigo AI Search Grader — unknown base — 0/4

- AEO Grader (aeograder.org) — unknown base — 0/4

- ArcAI — unknown base — 0/4

- BrightEdge — unknown base — 0/4

- Conductor — unknown base — 0/4

- GoVISIBLE — unknown base — 0/4

- Gushwork AI Search Grader — unknown base — 0/4

- HubSpot AEO Grader — unknown base — 1/4

- Mangools AI Search Grader — unknown base — 0/4

- ProductRank — unknown base — 0/4

- Profound — unknown base — 0/4

- seoClarity — unknown base — 0/4

The bottom line on transparency

At first glance you may ask why scoring well or badly on transparency matters. It’s a free tool. But as with any analytics data, if you can’t trust what comes out, why bother asking in the first place?

The eight tools (29%) that scored 3/4 or better measure something real and tell you what it is. Most of them are smaller than the enterprise platforms making the loudest noise. That is not a coincidence. Because of their pricing structures, the enterprise tools were at a clear disadvantage from the start, but the “scrappy startup” story has been around as long as the Internet.

Unfortunately, 21 of the 28 tools (75%) present a confident score with no visible methodology.

They disclosed no source for the data, nor any explanation of how the score is computed. You are left with no way to verify the finding.

You paste a URL, you get a number, and the number arrives inside a confident-looking dashboard with brand colors and a recommended next step, which is usually to book a demo.

Angle 3 — Fact, vibe, or wrong: the verdict

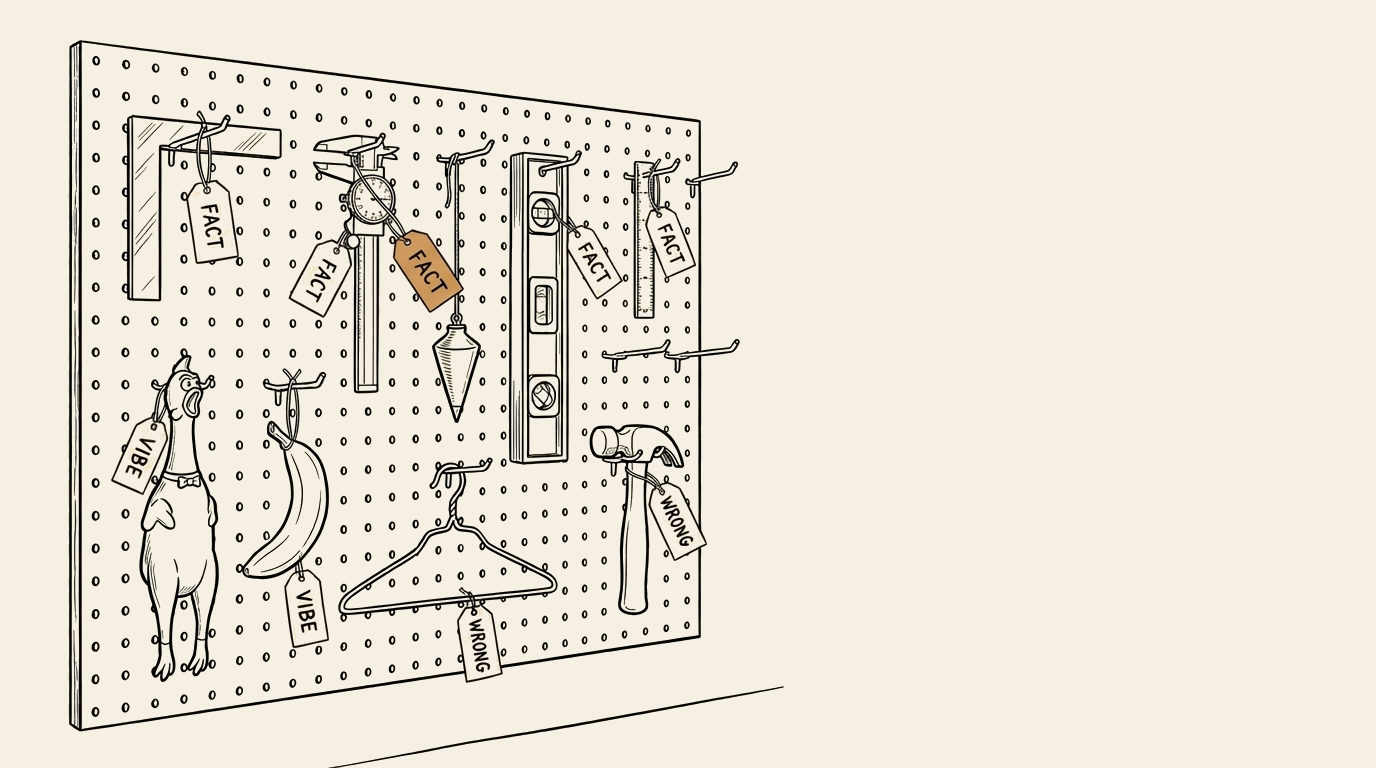

If you read the Find, Understand, and Trust framework that underlies my work with clients, you will recognize our measurement categories: Fact, Vibe, and Wrong. They apply here too.

A Fact tool measures a real, verifiable thing. Rerun the same test and the result is the same. Cross-check with an independent method and the answer lines up. Fact tools are deterministic — their inputs are code, not language models.

- Google Rich Results Test

- SchemaValidator.org

- Web Aloha AI Readiness Checker

- Wordlift Structured Data Audit

A Vibe tool asks an LLM a question, or wraps its output in an opaque score, or samples a tiny slice and calls it a population. A Vibe tool can be honest about why its answer is imprecise, and the honest ones are useful — the direction of the answer is often informative, the trend over time is often informative. The specific number is noise dressed up as signal.

A tool can be a Vibe tool for three different reasons. We’ve tagged each one.

- Drift — the tool calls LLMs and LLMs answer differently on rerun.

- Opaque scoring — the source is disclosed but the score formula is hidden, so no outside party can reconstruct how the number was built.

- Sample limit — the tool measures a small, unrepresentative slice (five prompts, one platform, one trial window) and presents it as a confident population-level score.

- Ahrefs Brand Radar — sample limit

- Gumshoe — drift

- Insites — opaque scoring

- LLM Pulse — drift

- LLMClicks AI Visibility Checker — drift

- LucidRank — opaque scoring

- Otterly — drift

- Peec AI — drift

- SE Ranking — opaque scoring

- Semrush free AI Visibility checker — opaque scoring

- Signal Check (full disclosure, this is my tool) — drift

- Wordlift AI Audit — opaque scoring
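The sample-limit problem is easy to quantify. This minimal Python sketch computes a Wilson score interval, a standard confidence interval for a proportion, around an observed mention rate. The interval is our choice for illustration and is not something any of these tools report. It also treats each prompt as an independent trial with a mentioned/not-mentioned outcome, which real prompt sets are not. The prompt counts are hypothetical.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if n == 0:
        raise ValueError("need at least one prompt")
    p = hits / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# The same observed 60% mention rate at three sample sizes.
for hits, n in [(3, 5), (60, 100), (600, 1000)]:
    low, high = wilson_interval(hits, n)
    print(f"{hits}/{n}: {low:.2f} to {high:.2f}")
```

With five prompts, a 60% mention rate is compatible with anything from roughly 23% to 88%. That is why a five-prompt "visibility score" tells you about direction at best.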

A Wrong tool presents a confident score with no visible methodology, or measures a thing that does not actually map to the claim on the box. The “AI search visibility score” that will not tell you which queries it ran, which platforms it tested, or how the score was computed — that is Wrong by definition. Not because the tool is malicious, but because the customer has no way to defend the number to anyone, which means the number is marketing.

- Adamigo AI Search Grader

- AEO Grader (aeograder.org)

- ArcAI

- BrightEdge

- Conductor

- GoVISIBLE

- Gushwork AI Search Grader

- HubSpot AEO Grader

- Mangools AI Search Grader

- ProductRank

- Profound

- seoClarity

Fact: 4. Vibe: 12. Wrong: 12. The distribution is what you should expect from a category this young: the signal lives at the smaller, scrappier end, and the loudest vendors are the least inspectable.
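You can check the verdict counts directly against the lists in this piece, along with the claim that the least inspectable tools cluster at the bottom. This short Python sketch transcribes each tool's transparency score and verdict from those lists and tallies them. No new data is involved, and the variable names are ours.

```python
from collections import Counter

# (tool, transparency score out of 4, verdict), transcribed from the lists above.
TOOLS = [
    ("Google Rich Results Test", 4, "Fact"),
    ("SchemaValidator.org", 4, "Fact"),
    ("Web Aloha AI Readiness Checker", 4, "Fact"),
    ("Wordlift Structured Data Audit", 4, "Fact"),
    ("Gumshoe", 4, "Vibe"),
    ("LLMClicks AI Visibility Checker", 4, "Vibe"),
    ("Ahrefs Brand Radar", 3, "Vibe"),
    ("Signal Check", 3, "Vibe"),
    ("Insites", 2, "Vibe"),
    ("LLM Pulse", 2, "Vibe"),
    ("LucidRank", 2, "Vibe"),
    ("Otterly", 2, "Vibe"),
    ("Peec AI", 2, "Vibe"),
    ("SE Ranking", 2, "Vibe"),
    ("Semrush free AI Visibility checker", 2, "Vibe"),
    ("Wordlift AI Audit", 2, "Vibe"),
    ("Adamigo AI Search Grader", 0, "Wrong"),
    ("AEO Grader (aeograder.org)", 0, "Wrong"),
    ("ArcAI", 0, "Wrong"),
    ("BrightEdge", 0, "Wrong"),
    ("Conductor", 0, "Wrong"),
    ("GoVISIBLE", 0, "Wrong"),
    ("Gushwork AI Search Grader", 0, "Wrong"),
    ("HubSpot AEO Grader", 1, "Wrong"),
    ("Mangools AI Search Grader", 0, "Wrong"),
    ("ProductRank", 0, "Wrong"),
    ("Profound", 0, "Wrong"),
    ("seoClarity", 0, "Wrong"),
]

verdicts = Counter(verdict for _, _, verdict in TOOLS)
print(dict(verdicts))

# Range of transparency scores within each verdict.
for verdict in ("Fact", "Vibe", "Wrong"):
    scores = [score for _, score, v in TOOLS if v == verdict]
    print(f"{verdict}: {len(scores)} tools, transparency {min(scores)}-{max(scores)}/4")
```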

The hidden cost of “free”

Free tools are not free. The cost is paid in one of three forms, and the form matters.

Email capture for nurture sequences. Roughly 45% of the free tools we tested follow this pattern. You enter a URL and an email to see the result. The score arrives, and so does a nurture sequence of blog posts, webinar invitations, and eventually a sales email. The better tools in this group offer reasonable value in exchange for the email. HubSpot’s AEO Grader, Insites, GoVISIBLE, Wordlift AI Audit, and Semrush’s free AI Visibility checker all fall into this bucket.

Sales-call required to see the full result. Roughly 31% of tools. The free tier shows you enough to confirm there is a problem and not enough to act on. Profound, BrightEdge, Conductor, seoClarity, and the short-trial tools (Peec AI’s 7-day trial, LLM Pulse’s 14-day trial) all use some version of this pattern. The cost here is time — yours and your team’s — spent on a qualifying conversation before you can see the data.

Eventual paid tier required. Roughly 24% of tools. Substantive free tier, clear paid tier, no qualifying call in between. Gumshoe (3 free reports), LucidRank, SE Ranking, Wordlift, LLM Pulse at its subscription, and Peec AI all fit here. This is the honest model. The free tier delivers real value and teaches you whether you want the paid tier. If the product is real, the upsell is consensual.

Match the cost model to the transparency score and a pattern emerges. The 4/4 tools are almost all in the “truly free” or “honest upsell” categories. The 0/4 tools are almost all in the “email capture” or “sales call required” categories. Transparency and cost discipline travel together.

Part 3 of this series picks up here, with the paid tool stacks that actually help with the fixing.

Time to adjust your expectations

Here is the part most free-tool reviews do not say out loud.

All of these tools, even the four that scored Fact, can only help you identify whether you have a problem in the area they measure. None of them, alone and for free, will reliably tell you what to do about it.

- Google Rich Results Test will tell you your schema has errors. It will not write the schema.

- Web Aloha will tell you your authorship signals are weak. It will not fix the byline structure across your CMS.

- Gumshoe will tell you ChatGPT is describing you inaccurately. It will not rewrite your About page.

- Signal Check will tell you there is a gap between what the AI platforms say about you and what your industry leader says. It will not close the gap.

If you use these tools as diagnostic instruments — instruments that read a signal, return a verdict, and point you at an area to investigate — they will serve you well.

If you use them as prescription instruments, you will be disappointed, and worse, you will probably paper over the disappointment with a score you share with leadership as if it were an answer.

It is worth stating the warning directly: a 73/100 AI visibility score you put in a board deck is not measurement. It is decoration. If someone on your team asks “what is that number actually measuring?” and you cannot answer past “the tool said so,” the number is costing you credibility, not earning it.

Take the help free tools can honestly give. Use them to identify where the problem lives. Then resolve to do the work of fixing it, or hire people whose job is to fix it. Do not substitute a score for a strategy.

What comes next

Part 3 is where we get to the tool stacks that actually help with the drilling and fixing. We will cover:

- What a working AI visibility stack looks like for the two buyer tiers that cover most of the B2B landscape: the validation-and-awareness buyer who needs a number she can walk into a board meeting with, and the diagnosis-and-implementation buyer who needs to know which specific pages are missing and what to do about them.

- When it makes sense to graduate from free tools to paid ones, and what to ask before you sign a contract.

- How to combine synthetic-prompt data, real-world signal data, and diagnostic fix lists into a monthly measurement rhythm your team can actually sustain.

Until then, keep the cheatsheet on your desktop. It is this piece as a single-page scan, grouped by the five questions. Share it with anyone you are comparing notes with. Community helping community is how we collectively keep the signal-to-noise ratio on the good side of this landscape.

Part 3 (coming soon): How to combine free and paid tools into a working AI visibility stack. Includes two buyer scenarios drawn from the research and tool recommendations by role.