The short version:

- Half of all Google searches now trigger an AI Overview, but the industry can't agree on what to call the work required to show up in them.

- The terminology confusion (GEO, AEO, AI SEO, AIO) isn't just annoying. It's slowing down the business decisions that actually matter.

- The fundamentals haven't changed as much as the acronyms suggest. A framework most marketers already know translates directly into this new context.

Something Shifted, and We're Still Arguing About What to Call It

Google's March 2026 Core Update completed April 8, and the results were stark. Sites relying on mass-produced AI content saw traffic drop 71%. Sites built on original data saw traffic rise 22%.

That's good news for all of us who are tired of watching "AI slop" win.

At the same time, ChatGPT, Perplexity, Claude, and Gemini are answering questions about your business right now, pulling from sources they chose for reasons no one knows. ALM Corp reported in April 2026 that 89% of brands appear in AI citations, but only 14% actively track them.

That's bad news for companies stuck trying to decide what counts as "valuable content" on this new internet. Compounding the problem: there are few clear analytics tools and even fewer benchmarks to guide them.

The Acronym Problem

Search any marketing publication right now and you'll find at least four terms for roughly the same thing:

- GEO (Generative Engine Optimization): Coined by researchers at Princeton, Georgia Tech, and IIT Delhi in a 2024 academic paper. Technically precise. Almost nobody outside SEO practitioners uses it.

- AEO (Answer Engine Optimization): The term was first used to distinguish between AI chat engines and AI web search. Broader adoption than GEO, but still niche.

- AI SEO or AI Search Optimization: What most marketers actually type into Google when they're trying to figure this out. High search volume, but vague enough to mean almost anything.

- AIO (AI Overviews): Sometimes refers specifically to the AI Overviews and AI Mode answers that appear in Google search results, and sometimes is applied to Google's Gemini AI as well.

These aren't just different labels for the same thing. Each carries assumptions about what matters most: the AI platform, the tactic, or the outcome.

The result is that a VP of Marketing looking for help understanding what's happening to the company's site traffic ends up more confused after researching than before. That's a problem for the whole industry, not just one company.

Old Language, New Things (and Vice Versa)

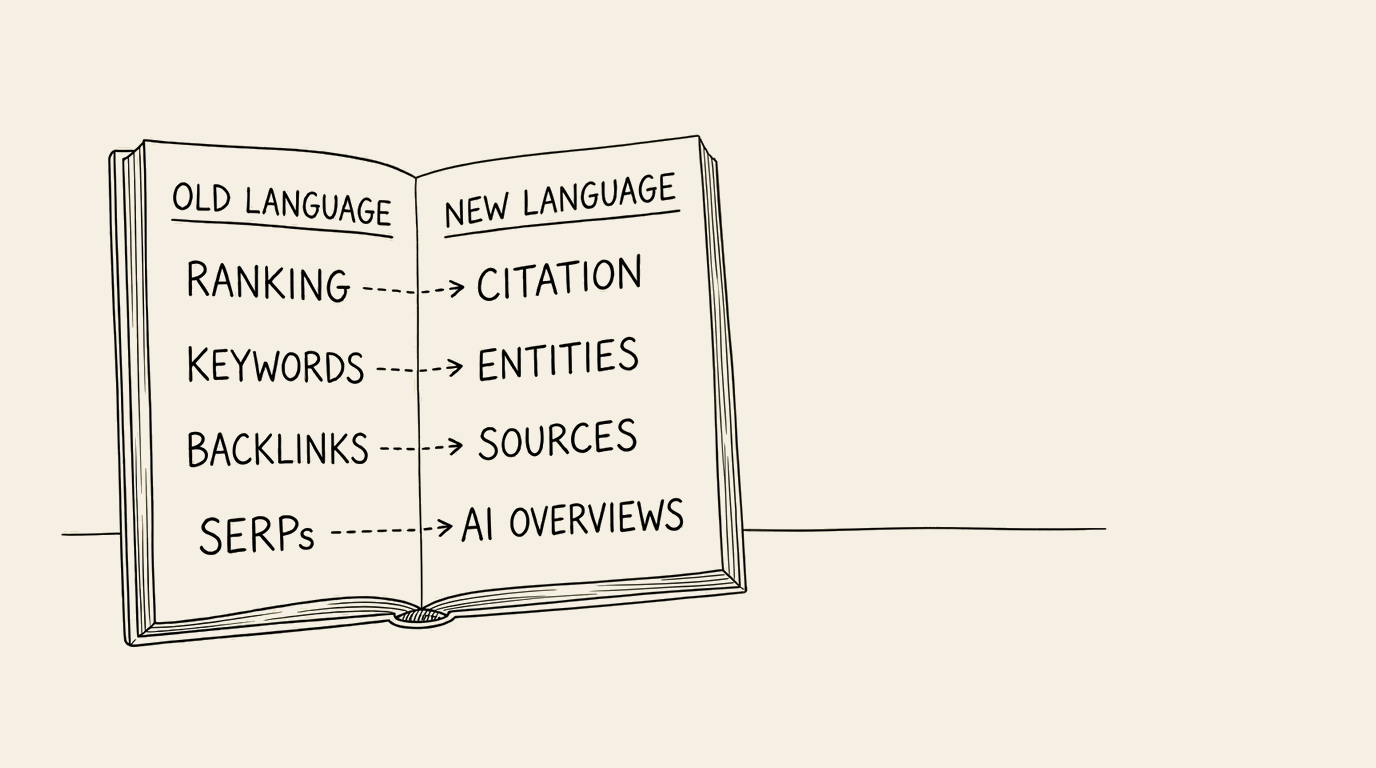

Part of the confusion comes from a pattern that repeats every time technology shifts. We take familiar words and stretch them to cover unfamiliar territory.

"SEO" originally meant optimizing for search engine crawlers and ranking algorithms. Now people use "AI SEO" to mean optimizing for large language models that don't rank pages at all; they cite sources. That's a fundamentally different behavior, but we're using the same three-letter abbreviation.

Going the other direction, new terms are being applied to practices that aren't actually new. The same levers used for "AI Optimization" are the ones that have been fueling SEO best practices for decades.

The terminology mess creates two real business problems:

- Teams can't align on priorities. When the SEO manager, the content director, and the CTO are each reading different articles using different terms, they end up building different mental models of the same problem. Budgets stall. Projects get scoped wrong.

- Vendors exploit the confusion. Every agency with an SEO practice now offers "AI optimization" as a service line. Some are doing meaningful work. Some are repackaging existing technical SEO audits with new slide decks. Without shared definitions, buyers can't tell the difference.

What Actually Changed (And What Didn't)

Here's where it helps to separate the genuinely new from the relabeled.

What's new:

AI platforms choose sources to cite, not pages to rank. That changes the economics entirely. ALM Corp found that the first source cited in an AI Overview captures 47% of clicks. The second gets 23%. The third gets 14%.
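
To see what that curve means in practice, here's a quick back-of-the-envelope sketch. The percentages are the ALM Corp figures above; the 1,000 total clicks is a hypothetical number chosen only to make the arithmetic easy to read, and positions past third return nothing because no figure was given for them.

```python
from typing import Optional

# Click share (percent) by citation position in an AI Overview,
# taken from the ALM Corp figures quoted in the text.
# No figure was reported for position 4 or later.
CLICK_SHARE_PCT = {1: 47, 2: 23, 3: 14}


def expected_clicks(total_clicks: int, position: int) -> Optional[float]:
    """Estimate clicks for one cited source at a given citation position.

    total_clicks is a hypothetical count of clicks going to cited sources,
    used for illustration only. Returns None for positions with no data.
    """
    pct = CLICK_SHARE_PCT.get(position)
    if pct is None:
        return None
    return total_clicks * pct / 100


for pos in (1, 2, 3):
    print(f"position {pos}: {expected_clicks(1000, pos):.0f} clicks")

# Ratio of first-position to third-position clicks (47 / 14).
print(f"first vs. third: {CLICK_SHARE_PCT[1] / CLICK_SHARE_PCT[3]:.1f}x")
print(f"top three combined: {sum(CLICK_SHARE_PCT.values())}%")
```

Out of 1,000 clicks, that's 470 for the first citation versus 140 for the third: roughly 3.4x the traffic for the same answer. The top three together take 84%.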

If most of your site content loads through JavaScript, four out of six AI crawlers see a blank page. Not thin content. Nothing at all. Vercel confirmed this across more than 500 million GPTBot fetches: zero evidence of JavaScript execution.
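
A rough way to see your pages the way a non-rendering crawler does is to read the raw HTML and check whether the text you care about is actually in it. This Python sketch assumes, as the Vercel data suggests for GPTBot, that the crawler never executes JavaScript. It uses only the standard library: `urllib.request` fetches HTML as served without running scripts, and `html.parser` skips `<script>`, `<style>`, and `<template>` content that never renders as text without JavaScript. The helper names, the "Acme Advisors" pages, and the key phrases are all hypothetical.

```python
import urllib.request
from html.parser import HTMLParser


class RawTextExtractor(HTMLParser):
    """Collect the text a client that never runs JavaScript would see.

    Content inside <script>, <style>, and <template> is skipped because it
    is not rendered as page text without script execution.
    """

    SKIP_TAGS = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self._skip_depth == 0 and text:
            self.chunks.append(text)


def raw_visible_text(html: str) -> str:
    """Return the page text present in the raw HTML, without rendering."""
    parser = RawTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def missing_phrases(html: str, key_phrases: list[str]) -> list[str]:
    """List key phrases absent from the raw text (case-insensitive)."""
    text = raw_visible_text(html).lower()
    return [phrase for phrase in key_phrases if phrase.lower() not in text]


def fetch_raw_html(url: str) -> str:
    """Fetch a page's HTML as served; urllib never executes JavaScript."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


# Hypothetical client-side-rendered page: the text only exists in a script.
spa_page = """
<html><body>
  <div id="root"></div>
  <script src="/app.js"></script>
  <script>window.__DATA__ = {"name": "Acme Advisors", "service": "tax advisory"};</script>
</body></html>
"""

# The same content served as plain HTML.
static_page = """
<html><body>
  <h1>Acme Advisors</h1>
  <p>We provide tax advisory services for mid-size manufacturers.</p>
</body></html>
"""

key_phrases = ["Acme Advisors", "tax advisory"]
print("SPA page missing:", missing_phrases(spa_page, key_phrases))
print("Static page missing:", missing_phrases(static_page, key_phrases))
```

To check a real page, pass `fetch_raw_html("https://www.example.com/")` to `missing_phrases` instead of the inline strings. Any key phrase reported as missing is text that only appears after JavaScript runs.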

Content has always gone stale, but now the penalty is measurable. Digital Bloom studied more than 7,000 AI citations and found that content published or updated within the past 30 days gets 3.2x more citations than content 90 days old.
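
If you have last-updated dates for your key pages (from a CMS export or sitemap `<lastmod>` values, for example), a simple triage script shows where the freshness gap is. This Python sketch buckets pages at the 30- and 90-day marks from the Digital Bloom comparison. Treating those marks as hard cutoffs is an illustrative simplification, not a threshold from the study, and the page paths and dates are hypothetical.

```python
from datetime import date


def freshness_bucket(last_updated: date, today: date) -> str:
    """Classify a page by days since its last update.

    The 30- and 90-day marks mirror the Digital Bloom comparison quoted
    in the text; using them as hard cutoffs is an illustrative simplification.
    """
    age_days = (today - last_updated).days
    if age_days <= 30:
        return "fresh"
    if age_days < 90:
        return "aging"
    return "stale"


# Hypothetical page inventory, e.g. exported from a CMS or sitemap <lastmod>.
today = date(2026, 4, 30)
pages = {
    "/services/tax-advisory": date(2026, 4, 15),
    "/about": date(2026, 2, 20),
    "/insights/2025-outlook": date(2025, 12, 1),
}

# Oldest first, so the stalest pages top the refresh list.
for url, updated in sorted(pages.items(), key=lambda item: item[1]):
    print(f"{freshness_bucket(updated, today):<6} {url}")
```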

Google's rankings used to determine who got cited everywhere. Not anymore. Only 38% of AI Overview citations now come from sites in Google's traditional top 10, down from 76% in 2024. AI platforms are building their own authority models.

What didn't change:

Your website still needs to be technically accessible to crawlers. The crawlers are different, but the principle is the same.

Content quality still determines whether you get found. In a 2024 Princeton University study, content that included specific statistics with named sources saw a 41% lift in citations. That's not a new idea. It's "write better content, cite your sources" with a measured uplift.

Authority still matters, just measured differently. Backlink counts used to be the proxy. Now it's entity consistency: does the same accurate information about your business appear across multiple authoritative sources? Authoritas tested this directly and found that a few comprehensive, accurate representations outperform many shallow mentions.
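
Checking entity consistency can start as a plain comparison: write down the canonical facts about your business, collect what each outside source says, and flag every mismatch. This Python sketch assumes you've gathered those facts by hand; the source names, fields, and values are hypothetical, and it is not Authoritas's methodology. It treats a missing field as a mismatch, since an omission is also a gap in how the business is represented.

```python
def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't count.

    A real check might also normalize punctuation, abbreviations
    ("St." vs. "Street"), or phone number formats.
    """
    return " ".join(value.lower().split())


def inconsistencies(
    canonical: dict[str, str], sources: dict[str, dict[str, str]]
) -> dict[str, list[str]]:
    """Map each source to the fields where it differs from, or omits, the canonical facts."""
    report: dict[str, list[str]] = {}
    for source, facts in sources.items():
        mismatched = [
            field
            for field, expected in canonical.items()
            if normalize(facts.get(field, "")) != normalize(expected)
        ]
        if mismatched:
            report[source] = mismatched
    return report


# Hypothetical canonical facts and hypothetical third-party listings.
canonical = {
    "name": "Acme Advisors",
    "city": "Columbus",
    "service": "Tax advisory for manufacturers",
}
sources = {
    "company_website": dict(canonical),
    "directory_a": {"name": "ACME Advisors ", "city": "Columbus"},
    "directory_b": {
        "name": "Acme Advisory Group",
        "city": "Cleveland",
        "service": "Tax advisory for manufacturers",
    },
}

print(inconsistencies(canonical, sources))
```

Here the website matches, one directory omits the service description, and the other has the wrong name and city: each of those is a place where an AI platform could pick up a conflicting picture of the business.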

The common thread: the fundamentals of being found, understood, and trusted online haven't been replaced. They've been translated into a new context with new tools, new crawlers, and new economics.

And some things that are old are truly new again.

Schema markup, for example, has existed since 2011. It was once a "nice to have" meant to garner stars and rich snippets. Then Microsoft's Fabrice Canel took the stage at SMX Munich in 2025 and said what many of us had suspected: schema markup directly feeds Bing's LLMs. It's not optional anymore. It's how AI agents understand your content.

I've been trying to train myself to say "structured data" instead of "schema" to mark this as a new need, not an old want. It's hard, and the old word still slips out all too often, but I'll keep working at it.
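
For a sense of what structured data looks like on the page, here's a minimal Python sketch that builds a schema.org `Organization` block as JSON-LD inside a `<script type="application/ld+json">` tag. `@context`, `@type`, `name`, `url`, `description`, and `sameAs` are standard schema.org fields; the `organization_jsonld` helper and the business details are hypothetical, and a real page would usually carry more properties than this.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Build a schema.org Organization description as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs points to the same entity's profiles on other sites,
        # tying the structured data to consistent outside mentions.
        "sameAs": same_as,
    }
    body = json.dumps(data, indent=2)
    # "</" could prematurely close the <script> tag; "<\/" is an equivalent
    # JSON escape for the same characters.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical business details for illustration.
print(
    organization_jsonld(
        name="Acme Advisors",
        url="https://www.example.com/",
        description="Tax advisory services for mid-size manufacturers.",
        same_as=[
            "https://www.linkedin.com/company/example",
            "https://www.example.org/directory/acme-advisors",
        ],
    )
)
```

Generating the block from one source of truth, rather than hand-editing it per page, also makes it easier to keep the facts identical everywhere they appear.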

A Framework That Translates

Fortunately, when you dig one layer deeper, you'll find that a lot of familiar tools and frameworks still apply to this transition.

Every SEO practitioner learned the three-legged stool: technical SEO, on-page SEO, off-page SEO. That framework still works. It just has a new translation.

| Traditional SEO | AI Visibility | The Question It Answers |
|---|---|---|
| Technical SEO (crawlability, speed, structure) | FIND | Can AI platforms access and read your content? |
| On-Page SEO (content quality, keywords, relevance) | UNDERSTAND | Does AI correctly interpret what your business does? |
| Off-Page SEO (backlinks, authority, reputation) | TRUST | Does AI trust you enough to cite you? |

Think of it as the same stool, built for a different room. The legs still need to be balanced. And just as in traditional SEO, the weakest leg determines how far you get.

The difference: traditional SEO is a ranking game. AI visibility is a citation game. You don't need to be result #1; you need to be the source the AI quotes when someone asks about your category.

We'll be going deeper into each of these layers in upcoming posts, because each one has its own problems, tools, and fixes. But the starting point is recognizing that you don't need to learn a new discipline from scratch. You need to translate what you already know.

Why the Terminology Matters for Your Business

This isn't an academic debate. The language problem has practical consequences.

If your organization thinks "AI SEO" is just regular SEO with a new label, you'll underinvest. You'll miss the JavaScript rendering gap, the entity identification and consistency requirements, and the freshness signals that didn't matter before.

If your organization thinks AI optimization is an entirely new discipline requiring entirely new expertise, you'll overinvest in the wrong places. You'll hire specialists before you've fixed the basics, or buy tools that solve problems you don't have yet.

The productive middle ground is recognizing that this is a transition, not a revolution. The foundations are familiar; the applications are new. The window for early movers is real: AI platforms increasingly rely on their own previous citations, creating a feedback loop that favors the businesses that get their foundations right first.

We audited 120 B2B professional services websites to understand where firms actually stand in this transition. The findings were consistent: most companies have the raw material (good content, established brands, real expertise) but haven't translated it into a format AI platforms can access, interpret, or trust.

The terminology will eventually settle. The business decisions can't wait for it.

Where to Start

Three questions can tell you how far along your organization is in this transition:

- Can AI platforms actually reach and read your website? Test your key pages with JavaScript disabled. Check your robots.txt for AI crawler blocks. If the answer is no, nothing else matters until you fix that.

- When AI describes your business, is it accurate? Ask ChatGPT, Perplexity, Claude, and Gemini about your company. If the answers are generic or wrong, your content isn't structured for AI comprehension.

- Does AI cite you when someone asks about your category? Search for your core topics across multiple AI platforms. If competitors appear and you don't, you have a trust gap.
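
The robots.txt part of the first question is easy to script. Python's standard-library `urllib.robotparser` applies robots.txt rules for a given user agent. The agent list below is an illustrative, non-exhaustive set of AI-related robots.txt tokens (vendors add and rename these, so confirm against each vendor's documentation), the `blocked_ai_agents` helper is hypothetical, and the robots.txt content is made up for the example. Individual crawlers may interpret edge cases differently from Python's parser, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser

# Illustrative, non-exhaustive AI-related robots.txt user-agent tokens.
# Vendors add and rename these, so confirm against each vendor's docs.
AI_USER_AGENTS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended", "CCBot")


def blocked_ai_agents(robots_txt: str, page_url: str, agents=AI_USER_AGENTS) -> list[str]:
    """Return the agents that the given robots.txt rules disallow for page_url.

    For a live site, you could instead call
    parser.set_url("https://www.example.com/robots.txt") and parser.read()
    to download and parse the real file.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, page_url)]


# Hypothetical robots.txt that blocks two AI crawlers site-wide.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_ai_agents(robots_txt, "https://www.example.com/services/"))
```

In this example, GPTBot and CCBot can't reach the services page at all, while the other agents fall through to the catch-all `*` rules, which only block `/admin/`.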

These three questions map directly to Find, Understand, and Trust. They also map directly to the three-legged stool you already know. The framework isn't new; the application is.

Wondering where your firm stands?

Our Signal Check shows you how AI platforms describe your company today, in under 60 seconds. Try it free at lizmicik.com/signal-check